Stay Up to Date

Submit your email address to receive the latest industry and Aerospace America news.

GOES-R weather-data volume drives NOAA network

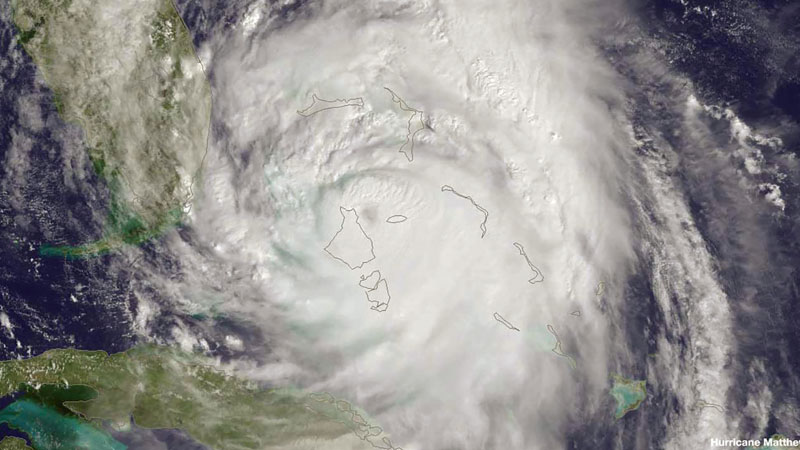

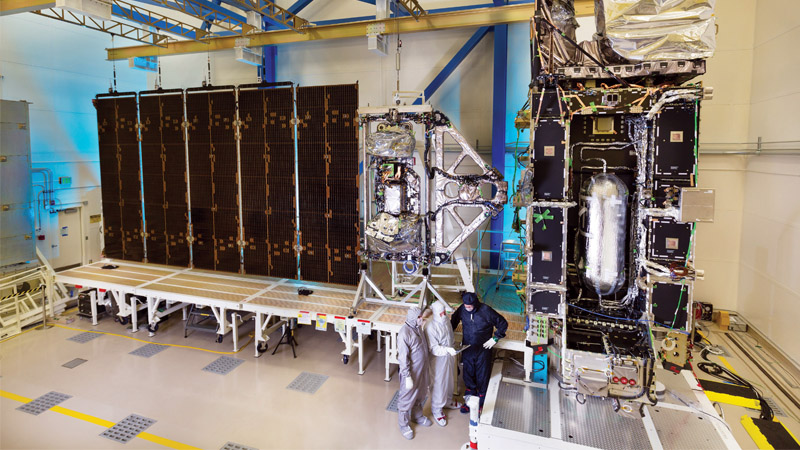

Our biggest challenge in developing the ground segment for NOAA’s new series of geosynchronous weather satellites was planning for the volume and speed of information compared with the existing Geostationary Operational Environmental Satellites. GOES-R has six instruments that will collect three times the number of spectral channels as the current satellites, with four-times-finer resolution and five-times-faster coverage, so the data must be transmitted from the satellite much faster. On the ground, computing capacity of 40 trillion floating point operations per second, or 40 teraFLOPS, is needed to transform this data into at least 3.5 terabytes of data products each day. That’s about the same amount of data as in 777 high-definition movies.
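A quick back-of-envelope check makes the daily product volume concrete. This sketch assumes decimal units (1 TB = 10^12 bytes) and spreads the volume evenly over 24 hours; neither assumption comes from the article, and the helper names are illustrative only.

```python
# Back-of-envelope check of the daily product volume quoted in the article.
# Assumption: decimal units (1 TB = 10**12 bytes, 1 GB = 10**9 bytes).
TB = 10**12
GB = 10**9
SECONDS_PER_DAY = 86_400

DAILY_PRODUCTS_BYTES = 3.5 * TB  # "at least 3.5 terabytes of data products each day"
HD_MOVIES = 777                  # the article's comparison


def bytes_per_movie(total_bytes: float, movies: int) -> float:
    """Average size of one movie implied by the comparison."""
    return total_bytes / movies


def average_rate_mbps(total_bytes: float, seconds: float) -> float:
    """Average rate in megabits per second if the volume were spread evenly."""
    return total_bytes * 8 / seconds / 1e6


if __name__ == "__main__":
    print(f"{bytes_per_movie(DAILY_PRODUCTS_BYTES, HD_MOVIES) / GB:.1f} GB per movie")
    print(f"{average_rate_mbps(DAILY_PRODUCTS_BYTES, SECONDS_PER_DAY):.0f} Mbps on average")
```

The comparison implies about 4.5 GB per movie, and 3.5 TB per day corresponds to an average of roughly 324 Mbps of product output.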

In space, a satellite in the current GOES-N series transmits sensor data at 2.62 megabits per second (Mbps), while GOES-R, S and T will transmit sensor data at 120 Mbps.
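The two sensor-data rates can be compared directly. The sketch below computes their ratio and, assuming each link ran continuously for a full day (an illustrative assumption, not a stated operating profile), the raw volume each rate would carry.

```python
# Compare the sensor-data downlink rates quoted for GOES-N and GOES-R.
GOES_N_MBPS = 2.62   # current GOES-N series
GOES_R_MBPS = 120.0  # GOES-R, S and T
SECONDS_PER_DAY = 86_400


def rate_ratio(new_mbps: float, old_mbps: float) -> float:
    """How many times faster the new link is."""
    return new_mbps / old_mbps


def daily_volume_tb(rate_mbps: float) -> float:
    """Decimal terabytes carried in 24 hours, assuming the link runs continuously."""
    bits = rate_mbps * 1e6 * SECONDS_PER_DAY
    return bits / 8 / 1e12


if __name__ == "__main__":
    print(f"GOES-R link is {rate_ratio(GOES_R_MBPS, GOES_N_MBPS):.1f}x faster")
    for name, rate in (("GOES-N", GOES_N_MBPS), ("GOES-R", GOES_R_MBPS)):
        print(f"{name}: {daily_volume_tb(rate):.3f} TB/day at {rate} Mbps")
```

At these rates, GOES-R carries roughly 46 times as much sensor data per second as GOES-N.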

Like all geostationary satellites, the GOES-R series must have a team and a ground system to manage it, the computing power to create actionable information from the sensor data, and the networking equipment to reliably distribute that information to forecasters at the National Weather Service. The information must also be sent to data repositories for later retrieval and back to the satellite, which then broadcasts key data products to users in the Western Hemisphere who are equipped with GOES Re-Broadcast terminals.

For GOES-R, NOAA’s comprehensive system requirements gave us design guidance for everything from enterprise-level monitoring and control of the system to the distribution of information products covering a long list of environmental conditions on Earth, as well as solar events in space. An enormous volume of data must come down from the spacecraft with extremely low latency, within seconds of the instruments measuring an event. We must also maintain very high availability for the mission management functions, meaning the actions that maintain the health and safety of the satellites, and for control of the weather sensors. Management data is critical for keeping remote sensing data flowing to the ground; in fact, the key mission management functions must have less than two seconds of downtime per year. High-availability requirements also apply to producing and delivering the most critical products, such as cloud and moisture imagery. These availability requirements were the most challenging for our team.
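To put "less than two seconds of downtime per year" in the usual availability terms, the sketch below converts allowed downtime into an availability fraction and a count of "nines." It assumes a 365.25-day year; the article does not specify one.

```python
import math

# Julian year (365.25 days); the article does not say which year length it uses.
SECONDS_PER_YEAR = 365.25 * 86_400


def availability(downtime_s: float, period_s: float = SECONDS_PER_YEAR) -> float:
    """Fraction of the period a function is up, given its allowed downtime."""
    return 1.0 - downtime_s / period_s


def nines(avail: float) -> float:
    """Count of 'nines' in an availability figure; 0.999 gives about 3."""
    return -math.log10(1.0 - avail)


if __name__ == "__main__":
    a = availability(2.0)  # "less than two seconds of downtime per year"
    print(f"availability: {a * 100:.6f}%  ({nines(a):.2f} nines)")
```

Two seconds per year works out to an availability of about 99.999994%, a little better than seven nines.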

We knew that a data bottleneck would defeat the purpose of flying more sophisticated instruments on GOES-R, including the Advanced Baseline Imager. So we decided to build the ground system design around the processing infrastructure. Unlike NOAA’s polar-orbiting weather satellites, GOES satellites transmit data as the instruments capture it. Harris invested internal research and development funds to understand the problem and created multiple architectures for high-throughput product processing that optimized the input and output of sensor and processed data across many computer servers. We benchmarked these architectures against each other to find the right solution, one that will scale easily to ever-greater data rates and volumes to meet needs beyond GOES-R. We then conducted rigorous end-to-end system testing with large volumes of test data to confirm the team’s design selection.

To meet the throughput and latency demands of GOES-R, the ground system will begin applying weather product algorithms as soon as data is received from the satellite, without waiting for the full scene to arrive and without waiting for an upstream algorithm to finish processing the whole scene. Each algorithm passes its output directly to the next algorithm, which performs the next processing function. To support this flow of data through the system, the team developed a creative caching approach that avoids input-output bottlenecks: sensor and processed data are passed through high-speed memory, rather than disk, to the algorithms that need them, which run on high-performance computer servers.
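The segment-by-segment flow described above can be sketched with Python generators. This is a minimal illustration under stated assumptions, not the Harris implementation: the segment format, the two algorithms (`calibrate` and `threshold`), and the dictionary-backed `MemoryCache` are hypothetical stand-ins for the real product algorithms and high-speed memory layer.

```python
from typing import Callable, Dict, Iterable, Iterator, List, Tuple

# Hypothetical data format: each downlinked segment is (segment_id, pixel values).
Segment = Tuple[int, List[float]]


class MemoryCache:
    """In-memory store keyed by (algorithm name, segment id), standing in for
    the high-speed memory layer that replaces disk I/O between algorithms."""

    def __init__(self) -> None:
        self._store: Dict[Tuple[str, int], List[float]] = {}

    def put(self, stage: str, seg_id: int, data: List[float]) -> None:
        self._store[(stage, seg_id)] = data

    def get(self, stage: str, seg_id: int) -> List[float]:
        return self._store[(stage, seg_id)]


def downlink(segments: List[List[float]], trace: List[str]) -> Iterator[Segment]:
    """Stand-in for sensor data arriving from the satellite one segment at a time."""
    for seg_id, data in enumerate(segments):
        trace.append(f"recv {seg_id}")
        yield seg_id, data


def run_stage(name: str, fn: Callable[[List[float]], List[float]],
              upstream: Iterable[Segment], cache: MemoryCache,
              trace: List[str]) -> Iterator[Segment]:
    """Apply one algorithm to each segment as soon as it arrives, cache the
    result in memory, and hand it straight to the next algorithm."""
    for seg_id, data in upstream:
        out = fn(data)
        cache.put(name, seg_id, out)
        trace.append(f"{name} {seg_id}")
        yield seg_id, out


def demo() -> Tuple[List[str], MemoryCache]:
    trace: List[str] = []
    cache = MemoryCache()
    raw = [[0.2, 0.7], [0.4, 0.9]]  # two made-up segments of one scene
    # Hypothetical algorithms: a scaling "calibration", then a threshold product.
    calibrated = run_stage("calibrate", lambda d: [2.0 * x for x in d],
                           downlink(raw, trace), cache, trace)
    product = run_stage("threshold", lambda d: [1.0 if x > 1.0 else 0.0 for x in d],
                        calibrated, cache, trace)
    for _ in product:  # pull segments through the whole chain
        pass
    return trace, cache


if __name__ == "__main__":
    trace, _ = demo()
    print(trace)
```

Running `demo()` shows each segment passing through both algorithms before the next segment is received, which is the property that lets processing start before the full scene arrives.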

Polar-orbiting satellites have a somewhat different mission, one that requires capturing less data per unit of time. GOES-R and other geostationary satellites are positioned about 35,800 kilometers above the equator so they can stare continuously at weather features as they develop, which aids nowcasting. By contrast, polar orbiters pass over those features at an altitude of about 800 kilometers, viewing only a swath about 3,000 kilometers wide rather than the whole hemisphere. However, polar satellites eventually cover the whole Earth, including the poles, and typically downlink their stored raw data every 50 minutes.
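The geostationary altitude follows from Kepler’s third law for a circular orbit, r = (μT²/4π²)^(1/3), with the period T set to one sidereal day. The sketch below uses standard constants (Earth’s gravitational parameter, the sidereal day and the WGS 84 equatorial radius) and treats both orbits as circular, which is an idealization.

```python
import math

MU_EARTH = 3.986004418e14        # Earth's gravitational parameter GM, m^3/s^2
SIDEREAL_DAY_S = 86_164.0905     # one rotation of Earth relative to the stars, s
EARTH_EQ_RADIUS_M = 6_378_137.0  # WGS 84 equatorial radius, m


def orbit_radius_m(period_s: float, mu: float = MU_EARTH) -> float:
    """Circular-orbit radius from Kepler's third law: r = (mu * T^2 / (4 pi^2))^(1/3)."""
    return (mu * period_s**2 / (4 * math.pi**2)) ** (1 / 3)


def orbit_period_s(radius_m: float, mu: float = MU_EARTH) -> float:
    """Circular-orbit period: T = 2 pi sqrt(r^3 / mu)."""
    return 2 * math.pi * math.sqrt(radius_m**3 / mu)


def geo_altitude_km() -> float:
    """Height above the equator of an orbit whose period equals one sidereal day."""
    return (orbit_radius_m(SIDEREAL_DAY_S) - EARTH_EQ_RADIUS_M) / 1000


if __name__ == "__main__":
    print(f"geostationary altitude: {geo_altitude_km():,.0f} km")
    leo = orbit_period_s(EARTH_EQ_RADIUS_M + 800e3)
    print(f"period at 800 km: {leo / 60:.0f} minutes")
```

`geo_altitude_km()` returns about 35,786 km, and a circular orbit 800 km up has a period of roughly 101 minutes.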

The architecture we created maintains data flow and minimizes latency. The latency requirement for solar events, which involve measurements of electromagnetic radiation, was particularly stringent: 1.8 seconds from the time the instrument makes the measurement to the time the data is delivered to the Space Weather Prediction Center in Boulder, Colorado. Near-real-time delivery is critical because solar events can harm our nation’s vital communications and navigation infrastructure, and having sufficient time to react is essential to safeguarding it. As severe weather events increase, a reliable ground system that supports forecasters’ needs is increasingly critical.
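One common way to manage an end-to-end requirement such as the 1.8-second solar-event latency is a latency budget that allocates time to each stage and checks the total. The stage names and allocations below are hypothetical; the article gives only the end-to-end figure.

```python
from typing import Dict

LATENCY_BUDGET_S = 1.8  # measurement to delivery at the Space Weather Prediction Center

# Hypothetical stage allocations for illustration only; the article states just the total.
STAGE_LATENCY_S: Dict[str, float] = {
    "downlink": 0.40,
    "ingest": 0.20,
    "calibration": 0.50,
    "product_generation": 0.30,
    "distribution": 0.25,
}


def check_budget(stages: Dict[str, float], budget_s: float) -> float:
    """Return the remaining margin in seconds; raise if the stages overrun the budget."""
    total = sum(stages.values())
    margin = budget_s - total
    if margin < 0:
        raise ValueError(f"latency budget exceeded by {-margin:.2f} s")
    return margin


if __name__ == "__main__":
    print(f"margin: {check_budget(STAGE_LATENCY_S, LATENCY_BUDGET_S):.2f} s")
```

Keeping an explicit margin makes it obvious when a change to any one stage would push the chain past the requirement.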

Because lives and property are at stake, NOAA also required that the system have no single point of failure. NOAA told us which sites to use and for what, but we developed an enterprise management system to monitor and control execution at all sites concurrently. Those sites are in Suitland, Maryland; Wallops, Virginia; and Fairmont, West Virginia. Although the ground system sites are interconnected to operate as one system, they can operate independently of each other to provide failover redundancy.
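Failover among independently capable sites can be sketched as picking the most-preferred healthy site for a given function. The `Site` type, the health flag and the priority ordering are assumptions for illustration; the article does not describe how the enterprise management system ranks sites, and the primary and backup roles in the usage example are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Site:
    name: str
    healthy: bool  # result of a hypothetical health check
    priority: int  # lower value = preferred for this function (assumed ordering)


def select_active_site(sites: List[Site]) -> Optional[Site]:
    """Return the most-preferred healthy site, or None if no site can take over."""
    healthy = [s for s in sites if s.healthy]
    return min(healthy, key=lambda s: s.priority, default=None)


if __name__ == "__main__":
    sites = [Site("Suitland", healthy=False, priority=0),
             Site("Fairmont", healthy=True, priority=1)]
    active = select_active_site(sites)
    print(active.name if active else "no site available")
```

Because each site can run on its own, losing the preferred site only shifts the function to the next healthy one instead of stopping it.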

Each enterprise system our team delivered to NOAA contains approximately 300 racks of computers and network equipment, about 160 kilometers of interconnecting cable, and six 16.4-meter antennas designed to operate in sustained winds of 110 miles per hour (177 kilometers per hour), equivalent to a strong Category 2 hurricane. Each of the three sites can handle the tremendous volume of data required to support the GOES-R mission, and the system also positions NOAA to support new missions well into the future.

Because it takes years to develop a satellite ground system on the scale of GOES-R, it is critical to imagine the future.

We needed to develop a system that could expand with science. The architecture is highly scalable, so computing capacity can be added for future missions, and algorithms can be updated as required without affecting ongoing operations.
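One way to update algorithms without disturbing work already in progress is to swap a reference in a registry: jobs that started earlier keep the version they fetched, and new jobs pick up the update. The sketch below is a hypothetical illustration of that technique; the article does not say how the GOES-R ground system performs updates, and the `cloud_mask` thresholds are made up.

```python
import threading
from typing import Callable, Dict, List

Algorithm = Callable[[List[float]], List[float]]


class AlgorithmRegistry:
    """Maps each product name to its current algorithm version.

    update() swaps the reference under a lock: work that already fetched the
    old version finishes with it, and work that starts afterward gets the new one.
    """

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._algorithms: Dict[str, Algorithm] = {}
        self._versions: Dict[str, int] = {}

    def update(self, name: str, fn: Algorithm) -> int:
        """Install a new version of an algorithm and return its version number."""
        with self._lock:
            self._algorithms[name] = fn
            self._versions[name] = self._versions.get(name, 0) + 1
            return self._versions[name]

    def get(self, name: str) -> Algorithm:
        """Fetch the current version; callers keep this reference for one job."""
        with self._lock:
            return self._algorithms[name]


if __name__ == "__main__":
    registry = AlgorithmRegistry()
    registry.update("cloud_mask", lambda px: [1.0 if x > 0.5 else 0.0 for x in px])
    in_flight = registry.get("cloud_mask")  # a job that started before the update
    registry.update("cloud_mask", lambda px: [1.0 if x > 0.3 else 0.0 for x in px])
    data = [0.4, 0.6]
    print(in_flight(data), registry.get("cloud_mask")(data))
```

The in-flight job still applies the first version while new requests see the second, so no running work has to be stopped for the update.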

Our work means meteorologists will know more about the early formation of storms than they know today, which will improve severe weather preparedness and curtail unnecessary evacuations. There will be more time to prepare for thunderstorms and tornadoes. Airlines will be able to better plan their flight routes. In the same amount of time it takes to upload a selfie, meteorologists will know whether to advise us to carry an umbrella – or not. ★
