Stay Up to Date

Submit your email address to receive the latest industry and Aerospace America news.

Unlike hurricanes and volcanic eruptions, earthquakes have resisted all efforts at forecasting. Is it folly or the future to think we can solve that problem? Adam Hadhazy explores how satellite instruments may be bringing earthquake forecasting closer to reality.

Millions of people in a megacity had just taken their first few bites of lunch when the earthquake sirens blared and text alerts went out. The metropolis was already on edge because of the warning issued two days prior, when ground sensors picked up highly elevated strain in a nearby fault line. And now satellites had just detected the telltale atmospheric signatures of an imminent quake.

With perhaps 30 minutes before the devastating shaking would begin, the city went on lockdown. Utilities turned off water and gas lines. The well-practiced populace crouched in the strongest structural portions of their dwellings and workplaces. When the major quake struck just as predicted, though property destruction was severe, few residents lost their lives that day.

Alas, earthquake prediction of this sort cannot be done today. Despite decades of scientific inquiry, the most advanced public warning systems can only send alerts after an earthquake has started, buying a precious few seconds for those away from the epicenter where heavy shaking has yet to reach.

There is renewed hope, however, that humanity need not remain at the mercy of the planet’s tectonic spasms. Increasingly powerful investigational abilities offered by Earth-observing satellites, coupled with sensor networks on terra firma, are advancing our knowledge of earthquake potentiation. Researchers are confident they can deliver more precise statistics on the frequency of major quakes, allowing for better civic planning, infrastructure hardening and emergency preparedness.

Some scientists, meanwhile, still hold out hope for genuine, real-time prediction, like in our fictional scenario. Ask most seismologists, though, and they will flatly assert that pinpointing when an earthquake of a particular magnitude will rip forth is — and will always be — impossible. Unlike the clouds and air masses we can readily measure for meteorological outlooks, the opaque, solid ground underfoot might not offer any hints of what is to come.

“We’ve learned how to forecast a lot of natural hazards, so for most people, it just stands to reason that there must be something that is predictable about earthquake occurrences,” says Michael Blanpied, the associate Earthquake Hazards Program coordinator for the U.S. Geological Survey. Seismology has explained how earthquakes start and propagate, why and where they occur, and what their catastrophic potential is. “But the one thing we have not figured out,” Blanpied adds, “is whether there’s any indication that the Earth provides about the timing of large earthquakes.”

Some scientists outside the seismological consensus think that our world does in fact whisper its subterranean secrets. “It’s ‘politically correct’ to say that there are no detectable precursors for earthquakes,” says Kosuke Heki, a geophysicist at Hokkaido University in Sapporo, Japan. Heki was once a skeptic himself, but his recent work on perhaps the most tantalizing and controversial precursor type, involving electromagnetic atmospheric anomalies, has altered his perspective. “What I have seen is quite convincing,” he says.

The mainstream skeptics and fringe optimists alike will have their convictions tested as never before by the vast amounts of interlinked information pouring in from sensors in, on and above our inconstant planet. “We now have the ability to analyze vast quantities of data very quickly,” says Blanpied. “That alone is giving us tools we didn’t have even 10 or 20 years ago.”

A sudden shuddering

In terms of pure lethality and economic tolls in modern times, no act of nature surpasses the earthquake. The razing of built structures on land, plus the deluge of a tsunami should an earthquake strike offshore, can kill staggering numbers of people. Recent examples include the Indian Ocean earthquake and tsunami in December 2004 (death toll: 280,000) and the January 2010 Haiti earthquake (death toll: 160,000). Earthquakes in China and Japan in the last quarter-century have cost more than even Hurricanes Katrina and Harvey.

The maturing science of seismology in the 20th century worked out that earthquakes happen when great slabs of rock in Earth’s crust violently slide past one another at boundaries called faults. Before these sudden shifts, the slabs press together, building up stresses that strain and deform their constituent material. Working out these underlying mechanics offered hope that such disasters might be foretold.

A prime example of how this promise spectacularly fizzled is a place that bills itself as the “earthquake capital of the world”: Parkfield, California. This tiny, unincorporated community — population 18 — is situated right on the San Andreas Fault a couple of hundred miles northwest of Los Angeles, where the infamous boundary between two tectonic plates wends through the Southern Coast Range mountains.

Between 1857 and 1966, the Parkfield area experienced several sizeable magnitude 6 earthquakes at remarkably regular intervals, averaging 22 years apart. Recognizing this, the USGS partnered with California’s state geological agency on the Parkfield Earthquake Experiment in the 1980s. Earthquake experts publicly agreed on there being a higher than 90 percent chance that a significant quake would rumble the region by 1993. At last, seismologists believed they would catch an earthquake in the act, ushering in an era of short-term prediction.
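The arithmetic behind that expectation is simple enough to sketch. The snippet below is a minimal illustration, not the USGS forecasting method. It assumes the commonly cited years of the Parkfield sequence (1857, 1881, 1901, 1922, 1934 and 1966), which this article does not list, and the function name is hypothetical.

```python
from statistics import mean

# Commonly cited years of Parkfield's magnitude ~6 earthquakes (an assumption;
# the article gives only the 1857-1966 range and the 22-year average).
PARKFIELD_YEARS = [1857, 1881, 1901, 1922, 1934, 1966]

def mean_recurrence_interval(years):
    """Average gap, in years, between consecutive events."""
    gaps = [later - earlier for earlier, later in zip(years, years[1:])]
    return mean(gaps)

interval = mean_recurrence_interval(PARKFIELD_YEARS)
naive_next = PARKFIELD_YEARS[-1] + interval

print(f"mean interval: {interval:.1f} years")
print(f"naive next event: about {naive_next:.0f}")
```

A simple average hides how irregular the individual gaps are (12 to 32 years in this list), which is one reason a point estimate of this kind carries wide uncertainty.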

Scientists threw the seismological equivalent of the kitchen sink at Parkfield, peppering the landscape with tools-of-the-trade including seismometers, which measure ground motion; strainmeters, which measure ground deformation; creepmeters, amusingly named and which measure ground displacement; and magnetometers, which measure magnetic fields associated with ground stress. “Parkfield was identified as the best place to capture an earthquake,” says Blanpied. “It became the most heavily scientifically instrumented patch of earth on the planet.”

Also brought to bear, as it was becoming increasingly available for civilian use in the late 1980s, was the GPS constellation. Triangulating signals between a constellation of satellites and receivers on Earth establishes the receiver’s precise location. The technology is ideal for tracking the slow movements of expanses of Earth’s crust over time and, should an earthquake occur, measuring the displacement of receiver sensors, indicating just how much the ground shifted — all of which ties back into geophysical models of earthquake behavior.

As the science world watched and waited, though, Parkfield’s due-by earthquake date came and went. It was not until 2004 that a significant mag-6.0 temblor finally broke out. Worse still, there was no warning; the suite of instruments failed to register anything unusual.

A (space) bird’s-eye view

Even though the Parkfield experiment did not pan out, it helped pave the way for multifaceted earthquake study projects that increasingly use space instruments. One example is the Plate Boundary Observatory. It consists of 1,100 GPS sensors and other monitoring equipment primarily placed from Alaska’s Aleutian Islands through the western United States and down into Baja California. For more than a decade, the integrated sensor network has monitored the movements of the Pacific and North American tectonic plates, two of the giant jigsaw pieces that compose our planet’s crust and at whose fault boundaries some of the largest quakes can occur. The project’s funding is sunsetting this year, but continuing analysis of its data haul will seek clues about the long-term evolution of continent-scale landmasses and their associated seismic hazards.

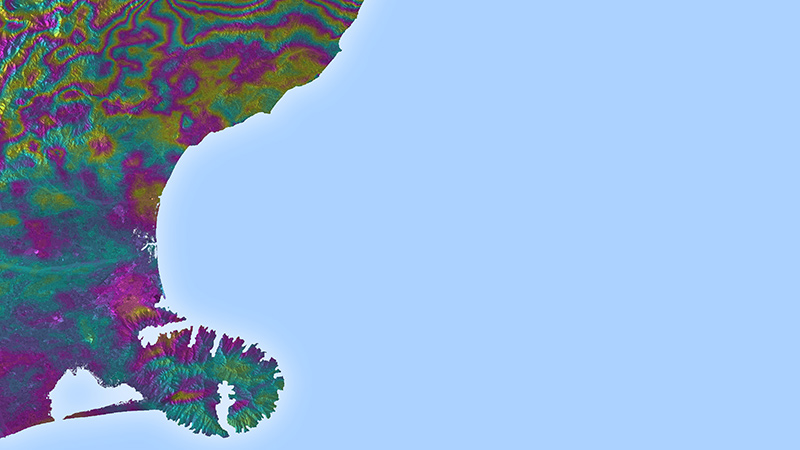

Another of these efforts is run by the United Kingdom-based Centre for Observation and Modelling of Earthquakes, Volcanoes and Tectonics, or COMET. The project receives data from the European Space Agency’s twin Sentinel-1 satellites, which were launched in 2014 and 2016 into polar orbits. The spacecraft scan the Earth in cloud-penetrating microwaves, generating 3-D maps via an Interferometric Synthetic Aperture Radar, or InSAR. It combines multiple radar images obtained at different times, tracking any movement and deformation of the ground to a sensitivity of a single millimeter over the span of a year — a dramatic improvement over satellite capabilities from the early 2000s. These observations reveal which areas of rock are elastically bending, storing up energy. When the bent rock eventually snaps back into place, it will unleash an earthquake.
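The core conversion behind InSAR can be sketched in a few lines. This is a simplified illustration, not COMET's processing chain. It assumes a C-band radar frequency of 5.405 GHz for Sentinel-1, ignores atmospheric and orbital corrections, and adopts a sign convention (positive means motion toward the satellite) that varies between software packages. The function name is hypothetical.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0
SENTINEL1_FREQ_HZ = 5.405e9  # C-band center frequency (assumed here)
WAVELENGTH_M = SPEED_OF_LIGHT_M_S / SENTINEL1_FREQ_HZ  # about 5.5 cm

def los_displacement_m(phase_change_rad, wavelength_m=WAVELENGTH_M):
    """Line-of-sight ground motion implied by an unwrapped phase change.

    The radar signal travels to the ground and back, so a full 2*pi cycle
    of phase corresponds to half a wavelength of ground motion.
    Sign convention (an assumption): positive = toward the satellite.
    """
    return -wavelength_m * phase_change_rad / (4.0 * math.pi)

one_fringe_cm = abs(los_displacement_m(2.0 * math.pi)) * 100
print(f"one interferogram fringe = {one_fringe_cm:.2f} cm of motion")
```

Because each full phase cycle spans only a few centimeters, combining many images over a long period is what allows much smaller average rates of motion to be estimated.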

“Satellite data has revolutionized this field,” says Alex Copley, a lecturer at the University of Cambridge who oversees the earthquakes and tectonics portion of COMET. By comparing the deformation data with the timing of past earthquakes, Copley says researchers can make very rough estimates of when the next major event in a location might occur. “This information can form the key input into developing earthquake-proof infrastructure and dramatically reduce the future loss of life from these events,” says Copley.

From ballparking to pinpointing

Besides honing traditional seismology’s probabilistic approach to long-term earthquake forecasting, satellites might just help crack open a window into more real-time, predictive approaches. Many anecdotal reports of earthquake precursors, including claims of unusual animal behavior such as elephants fleeing for higher ground, as well as aurora-like “earthquake lights,” have of course suffered for lack of eyewitness reliability.

Yet under satellites’ increasingly sensitive and steady gaze, Japan’s Heki and others suggest that traditional seismologists may be looking in the wrong place. Somewhat counterintuitively, they should be looking hundreds of kilometers up in the sky, where dangerous activity deep underground actually expresses itself. Heki’s research had initially focused on the atmospheric pressure changes and ionospheric disturbances wrought by earthquakes as they happen. While studying the monster 9.0 Tohoku earthquake that struck in March 2011 off the coast of Japan, Heki noticed something strange happening before the quake got underway. Forty minutes prior, GPS had recorded an increase in total electron content, the sum of electrons along the line of sight connecting a ground station to a satellite.
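How GPS yields total electron content can be sketched as follows. The ionosphere delays the two GPS carrier frequencies by different amounts, and the difference is proportional to the number of electrons along the signal path. This is a textbook first-order relation under stated assumptions, not Heki's analysis pipeline. It ignores receiver and satellite biases, and the function name and sample ranges are hypothetical.

```python
GPS_L1_HZ = 1575.42e6
GPS_L2_HZ = 1227.60e6
IONO_CONSTANT = 40.3   # m^3/s^2, first-order ionospheric delay constant
TECU = 1e16            # electrons per square meter in one TEC unit

def slant_tec_tecu(p1_m, p2_m):
    """Slant TEC (in TECU) from pseudoranges measured on L1 and L2, in meters."""
    f1_sq, f2_sq = GPS_L1_HZ ** 2, GPS_L2_HZ ** 2
    scale = f1_sq * f2_sq / (IONO_CONSTANT * (f1_sq - f2_sq))
    return scale * (p2_m - p1_m) / TECU

# Hypothetical measurement: the L2 range reads 1 meter longer than L1.
print(f"{slant_tec_tecu(20_000_000.0, 20_000_001.0):.2f} TECU")
```

Tracking how this value changes over time for each satellite-receiver pair is what would let an enhancement stand out against the background.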

Now, Heki knew that total electron content is in constant flux, due to spurts of geomagnetic activity, for instance. Curious, though, he looked back at historical data for a series of quakes over recent decades. Lesser quakes in the 6- and 7-magnitude range showed no anomalies over the portion of the atmosphere above what would become their epicenters. But major quakes with magnitudes above 8.0 often exhibited total electron content enhancements similar to Tohoku’s. Crucially, the strength of the anomaly and its lead time before quake initiation rose or fell in tandem with magnitude, making it hard to chalk it all up to natural variation in electron content. “I’d never seen such a clear precursor before,” Heki says.

He is hardly alone in spotting puzzling pre-quake atmospheric activity with spacecraft. The Swarm for earthquake study, or SAFE project, relies on the European Space Agency’s three-satellite Swarm constellation. Launched in 2013, Swarm’s mission is to precisely measure Earth’s magnetic field, adding another earthquake investigatory angle to GPS and ground-mapping satellites. SAFE has reported on magnetic field and electron density anomalies appearing before several larger earthquakes in the last few years. “The results show that there is clear significant statistical correlation between these anomalies and the earthquakes,” says Angelo de Santis, the leader of SAFE and the director of research at the National Institute of Geophysics and Volcanology in Rome.

Connecting what’s above with what’s below

Numerous other reports of potential precursors continue cropping up in the literature. The USGS’ Blanpied agrees that some of these signals are “intriguing,” but that far more work needs to be done to flesh out the supposed mechanisms behind them. “People have made a lot of observations on the ground and from satellites and have tried to correlate those with the occurrences of large earthquakes,” he says. “What we lack right now are real, physical models that tie together what we expect to be happening in the ground with what we expect to be emitted from the surface.”

Heki and others do not think it implausible that subterranean seismic activity could have measurable effects a couple of hundred kilometers up into the ionosphere, the upper layer of Earth’s atmosphere where many anomalies are detected. One such proposed linkage is from the intense stressing of rocks ahead of an earthquake. Friedemann Freund, a senior researcher at NASA’s Ames Research Center in Mountain View, California, and an adjunct professor of physics at San Jose State University, has shown in the lab how stressed rocks can act like a semiconductor battery.

When compressed, chemical peroxy bonds in the rocks break, drawing in negatively charged electrons. A wave of positive electromagnetic charge then propagates as neighboring electrons keep sliding over to fill the just-created charge gaps. The pulses of charge generated in this manner in the lab are weak. But if scaled up to thousands of cubic kilometers of rock, the pulses might just extend through Earth’s surface and perturb the ionosphere. Freund’s model also calls for several consequences near ground level, including carbon monoxide production related to oxygen (ionized by the stressed rocks) oxidizing organic material in soil. In support, Freund points back to increased carbon monoxide levels at the bottom of the atmosphere detected by NASA’s Terra satellite prior to a 7.7-magnitude quake that hit Gujarat, India, in 2001.

As for earthquakes at sea, where the crustal rocks in question are separated from the atmosphere by kilometers of water, Freund further suggests that flowing current in ocean beds could generate ultra-low frequency radio waves. These waves might likewise interact with the ionosphere, yielding the sorts of precursors Heki has potentially identified.

While it is all a bit speculative, ample scientific literature from around the world buttresses the concept of atmospheric earthquake precursors. Freund admits to having received “a lot of flak” for his ideas from the seismology community. But he thinks the field is too hung up on mechanical explanations for earthquake initiation and would benefit from a broader interdisciplinary approach, bringing in chemistry and other overlooked, potentially relevant subspecialties in physics. (Freund, aged 80, cut his teeth in materials science, studying defects in crystals that act like his pre-earthquake stressed rock.) “Seismologists say earthquakes cannot be predicted, because seismologists cannot predict them,” Freund says.

In the decades ahead, deeper analyses of old earthquakes, along with the wealth of data that new temblors will unfortunately and inevitably provide, should make humanity safer. Just maybe, through intensive monitoring on land, at sea and from space, earthquakes could become as predictable as a severe thunderstorm.

“I would say take an open mind to it,” says Blanpied. “Take advantage of that massive amount of data and the fantastic earthquake catalogs we now have, really do the numbers, and see what comes out. It may be surprising.”

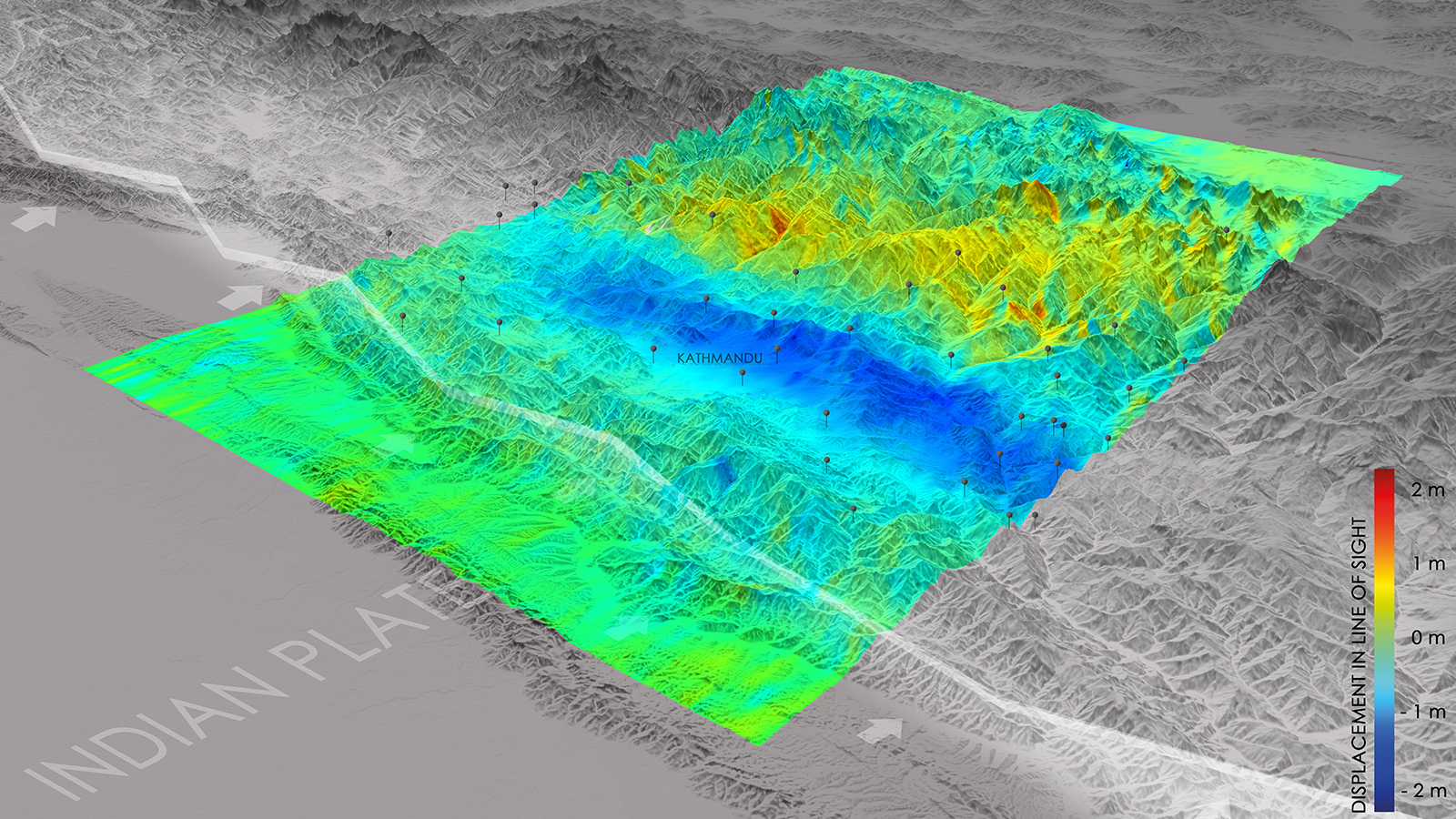

The European Space Agency image at the top of this page shows the lay-of-the-land changes captured by Europe’s Sentinel-1A satellite after a 2015 quake in Nepal. The white line running diagonally through the image is the fault line between the Eurasian (top) and the Indian tectonic plates. Blue areas indicate uplift of 0.8 meters toward the satellite, while yellow indicates gradual sinking.

INDUCED EARTHQUAKES

A group of scientists created the Human-induced Earthquake Database, or HiQuake, to track earthquakes that were caused at least partially by human activities, such as mining and water reservoirs.

About Adam Hadhazy

Adam writes about astrophysics and technology. His work has appeared in Discover and New Scientist magazines.
